Laboratory for Digitalisation — Prof. Dr. Wolfgang Mauerer

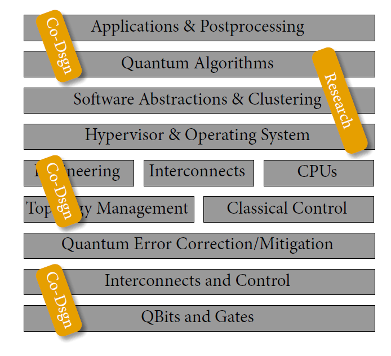

The Laboratory for Digitalisation primarily focuses on the intersection between three research areas: Quantum Computing, Systems Engineering, and Software Engineering. Future computing systems will leverage non-classical algorithms, and their hardware and software architectures need to combine advantages of classical and quantum processing units. Consequently, scientific progress needs interdisciplinary thinking across fields now more than ever. The group seeks cross-cutting answers to highly topical scientific questions and participates in active transfer into applications.

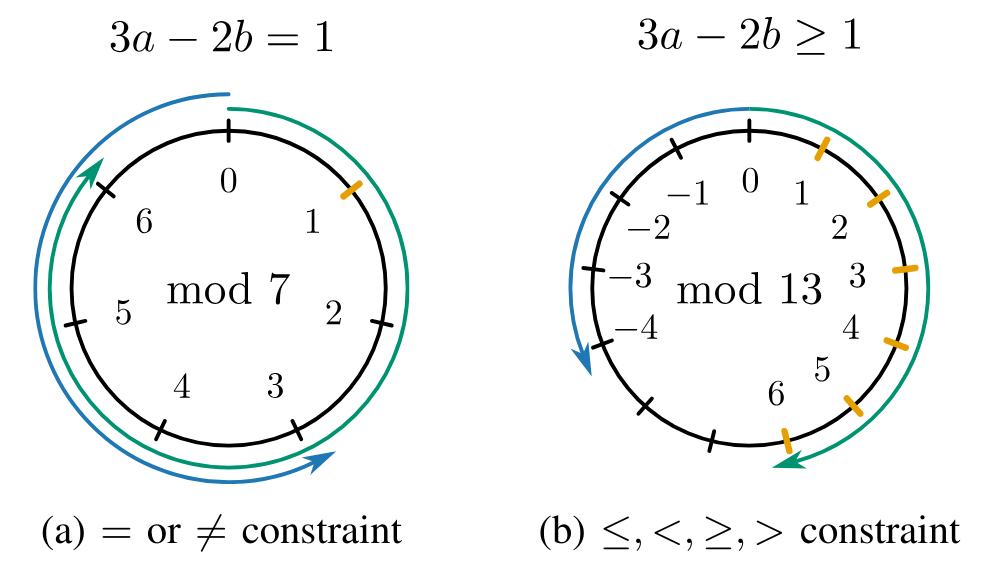

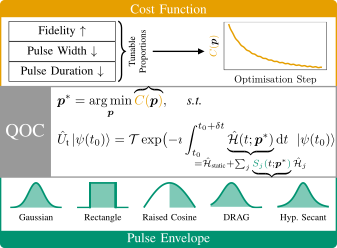

Quantum Computing

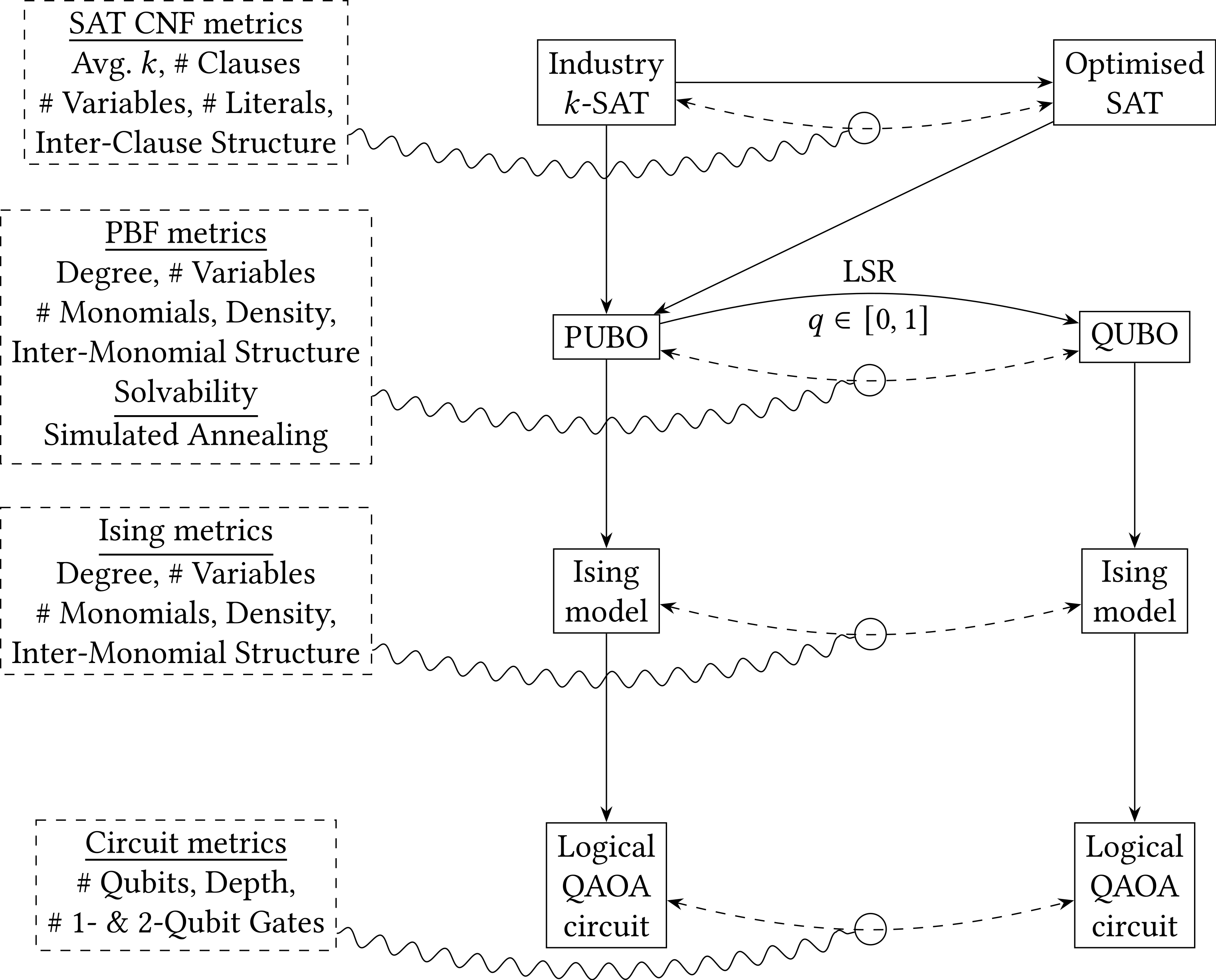

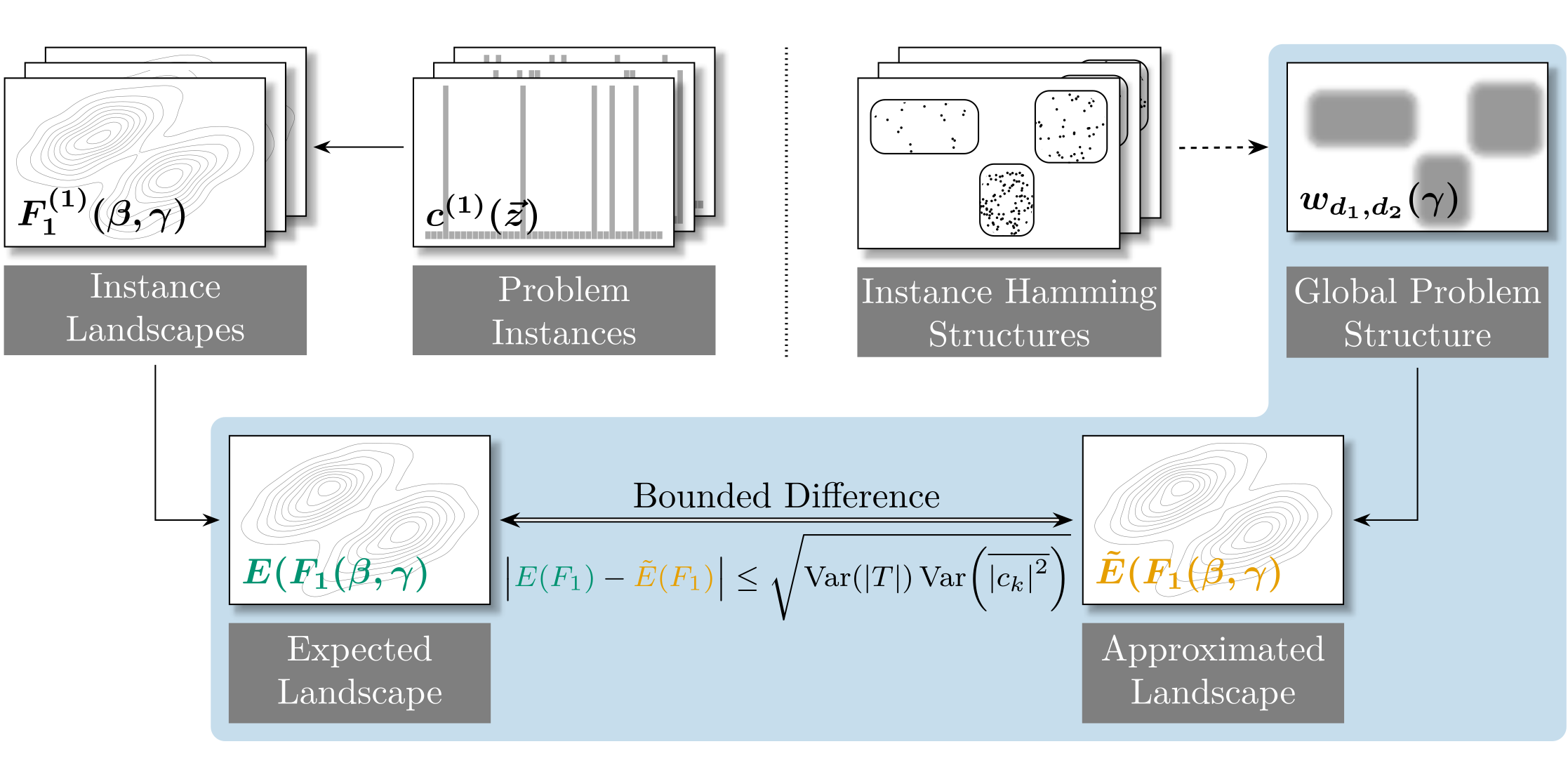

We work towards quantum advantage on gate-based quantum computers and quantum annealers by designing integrated quantum algorithms, systems and software.

Contact: Prof. Dr. Wolfgang Mauerer

Systems Engineering

The Systems Architecture Research Group investigates modern architectures for embedded systems, with a strong focus on OSS components.

Contact: Dr.-Ing. Ralf Ramsauer

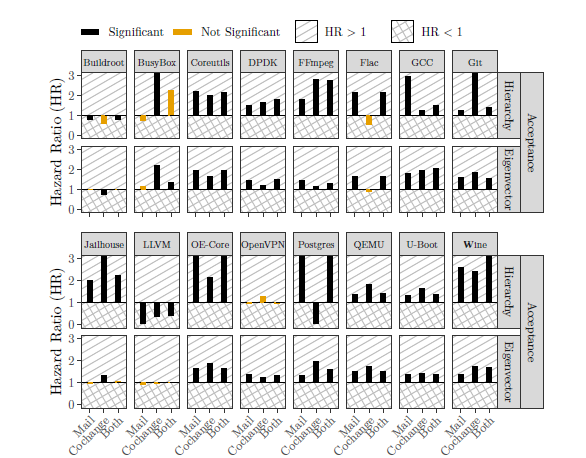

Software Engineering

We further quantum and classical software engineering by mining quantitative insights using statistics and machine learning, with a particular focus on reproducibility.

Contact: Nicole Höß (M.Sc.)

News and Trivia

Web Services

Publicly available services hosted by the Laboratory for Digitalisation:

We're Hiring!

Want to contribute to ongoing research at the Laboratory for Digitalisation? Check out our list of Open Topics, or drop us an email if none of the presented thesis ideas interests you or if you have a brilliant idea of your own that you want to pursue.

Events

Upcoming

There are currently no upcoming events scheduled. Check back at a later time.

Past Events

Wolfgang Mauerer contributes a talk at "Quantum Technologies for Fundamental Physics: Global Networks" of NSF.

Wolfgang Mauerer participates in the 1st PhD School on Quantum Software Engineering (QSE), held May 11-13, 2026, in Jarandilla de la Vera (Spain).

Wolfgang Mauerer gives a plenary talk at the IOP Publishing workshop on "Progress in Quantum Machine Learning" at Tsinghua University, Beijing, China.

Wolfgang Mauerer participates as a panelist in the workshop on Quantum Computing Software Stack of Fraunhofer-Institut at Forum Digitale Technologien in Berlin.

Dominik Köster contributes a keynote speech on the practical application of AI within our AIM-SMEs project and prompting techniques at the 6th AIAMOcamp in Berlin.

Ralf Ramsauer contributes an invited talk on future challenges for systems in quantum-control architectures to WSOS2026 of GI.

As part of our activities in the Münchner Kreis, we receive updates about neutral atom technology at planqc.

Dr. Marcel Niedermeier gives a lecture on "Conquering the curse of dimensionality - wie Tensornetzwerke eine Brücke zwischen klassischer und Quantenphysik ermöglichen" (how tensor networks enable a bridge between classical and quantum physics) at OTH Regensburg.

Nicole Höß presents her work on Threats to Validity in Mining Software Repositories at the Best-Of selection of German software engineering papers at SE2026 in Bern: "Does the Tool Matter? Exploring Some Causes of Threats to Validity in Mining Software Repositories".

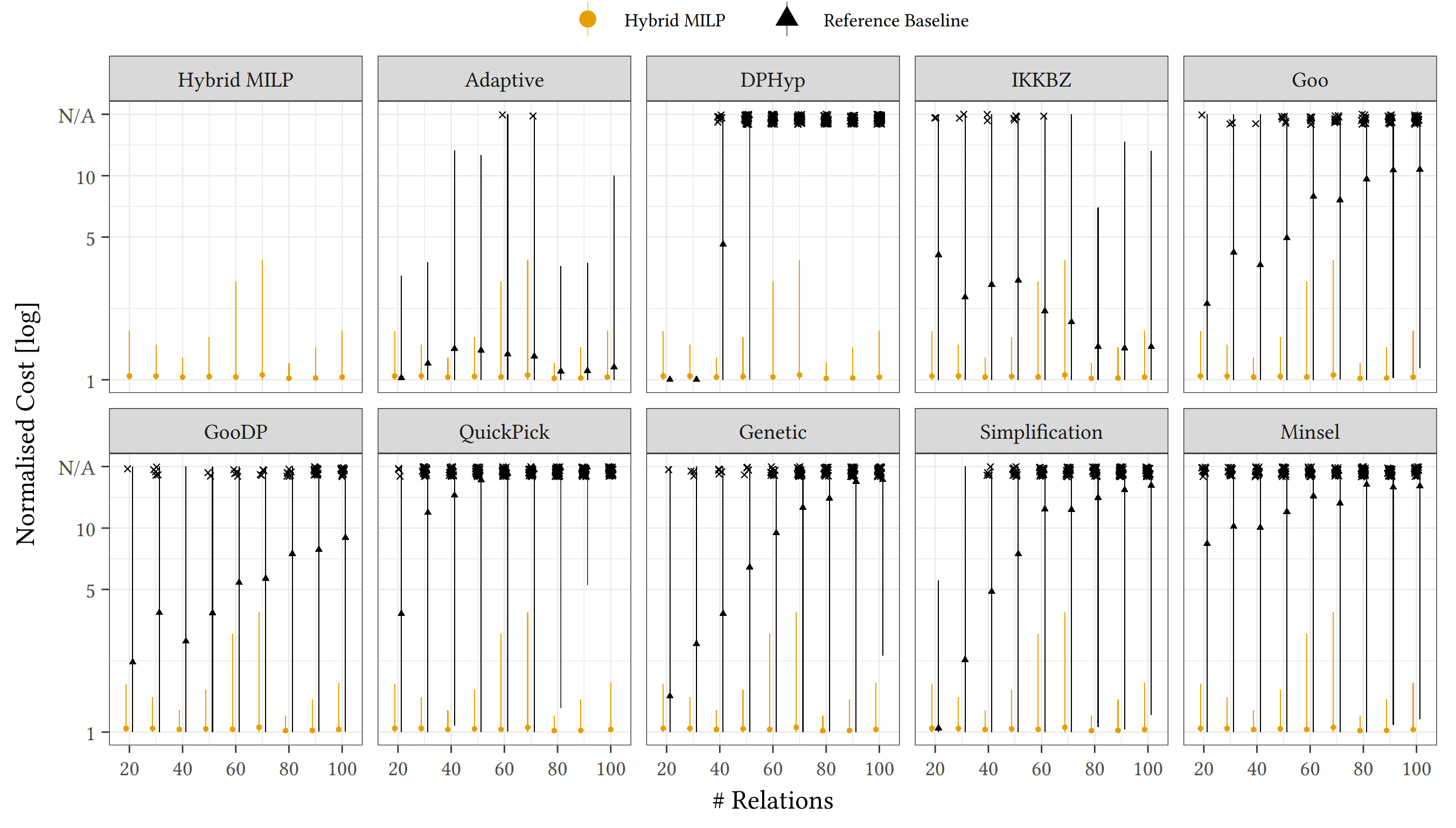

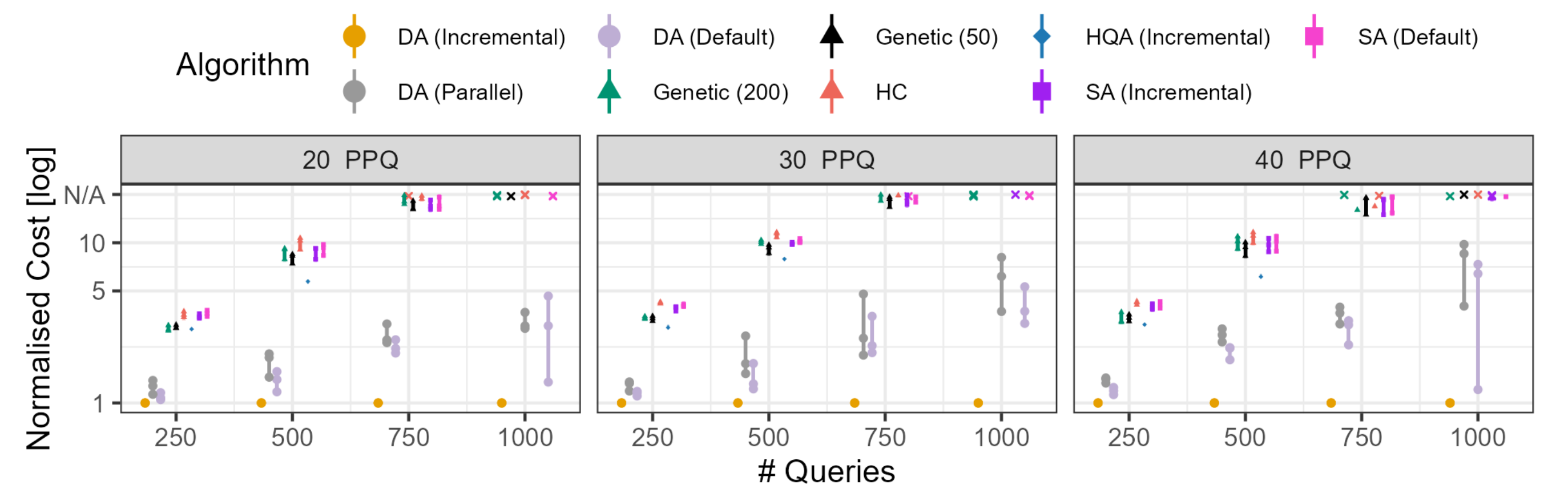

Simon Thelen presents his paper "Predict and Conquer: Navigating Algorithm Trade-Offs with Quantum Design Automation" at the Q-STAV workshop in Bern.

Wolfgang Mauerer will be part of two Examination Committees for thesis defenses of the Ph.D. Program in Computer at the University of Pisa, Florence and Siena, Italy.

Dr. Jonas Rigo (Forschungszentrum Jülich) presents his latest research on "Dynamics of Strongly Correlated Quantum Matter with Neural Quantum States" in Room K218 at 10:30h.

LfD Lecture Series WiSe25 5/5: Simon Thelen presents "Zwischen Qubits und QR-Codes: Googles neuer Quantenalgorithmus" (Between Qubits and QR Codes: Google's New Quantum Algorithm), 18:00-19:30 in K003. Free drinks are provided!

LfD Lecture Series WiSe25 4/5: Maja Franz presents "Hype oder Hoffnung? Quantencomputing in maschinellem Lernen" (Hype or Hope? Quantum Computing in Machine Learning), 18:00-19:00 in K003. Free drinks are provided!

LfD Lecture Series WiSe25 3/5: Oliver von Sicard presents "Preise und Pixel: KI erkennt Prospekt-Pannen mit YOLO, LLM und OpenCV – meistens" (Prices and Pixels: AI Detects Flyer Mishaps with YOLO, LLM, and OpenCV, Mostly), 18:00-19:30 in K003. Free drinks are provided!

LfD Lecture Series WiSe25 2/5: Ralf Ramsauer presents "Vom Prompt zum Treiber: Interaktive Linux Kernelentwicklung mit KI" (From Prompt to Driver: Interactive Linux Kernel Development with AI), 18:00-21:00 in K003. Free drinks are provided!

LfD Lecture Series WiSe25 1/5: Lukas Schmidbauer, Simon Thelen, and Maja Franz present "Das Quantentutorial: Vom Optimierungsproblem zum Schaltkreis" (The Quantum Tutorial: From Optimization Problem to Circuit), 18:00-20:00 in K003. Free drinks are provided!

Kickoff of our BMFTR-funded project NaQuaMo in Cologne with our valued partners University of Cologne, Siemens Foundational Technologies, and Siemens Mobility.

Kickoff of our BMFTR-funded project Q-GeneSys in Munich, together with our valued partners Fraunhofer IIS, IQM, and Siemens Foundational Technologies.

Wolfgang Mauerer contributes a talk on quantum algorithms to the workshop on Opportunities and Risks of Quantum Technologies of the Deutsche Physikalische Gesellschaft.